Dr Hammond Pearce is exploring how generative technologies are secured and what can be trusted.

Long before large language models (LLMs) or AI safety debates entered the public conversation, the interest in computing of Dr Hammond Pearce, from the UNSW School of Computer Science and Engineering, was grounded in the physical world.

“I got started playing with automation, controllers, microcontrollers and things that dad had just brought home. I was always really interested in robotics,” Hammond said.

“I very much describe myself as a computer engineer, not a computer scientist. I mostly focus on the building of things – then on top of that, I’m interested in AI and security.”

Hammond’s early interest in robotics, a stint at NASA and years of research in hardware security laid the foundation for his work in AI. When LLMs like GPT-2 began to emerge around 2020, Hammond saw an opportunity and began testing whether these models could be used for hardware design.

Engineers usually describe what they want a digital system to do in plain English, before translating those ideas into specialised code that computers can understand. Hammond’s research asked whether AI could automate that translation – a direction that has since gained traction but was unconventional at the time.

“We did the very first study asking, can we use these newfangled, large language models to make hardware? And it sort of worked,” he said.

In testing, the model produced correct code for almost 95% of tasks in the evaluation set. However, the experiment, detailed in the paper DAVE: Deriving Automatically Verilog from English, was dismissed by some in the tech community as an interesting gimmick. For Hammond, though, it marked a turning point.

“It was the first time any tech magazine had ever written about my work, even though they savaged it. I thought, this is fantastic, I'm doing stuff people are reading about.”

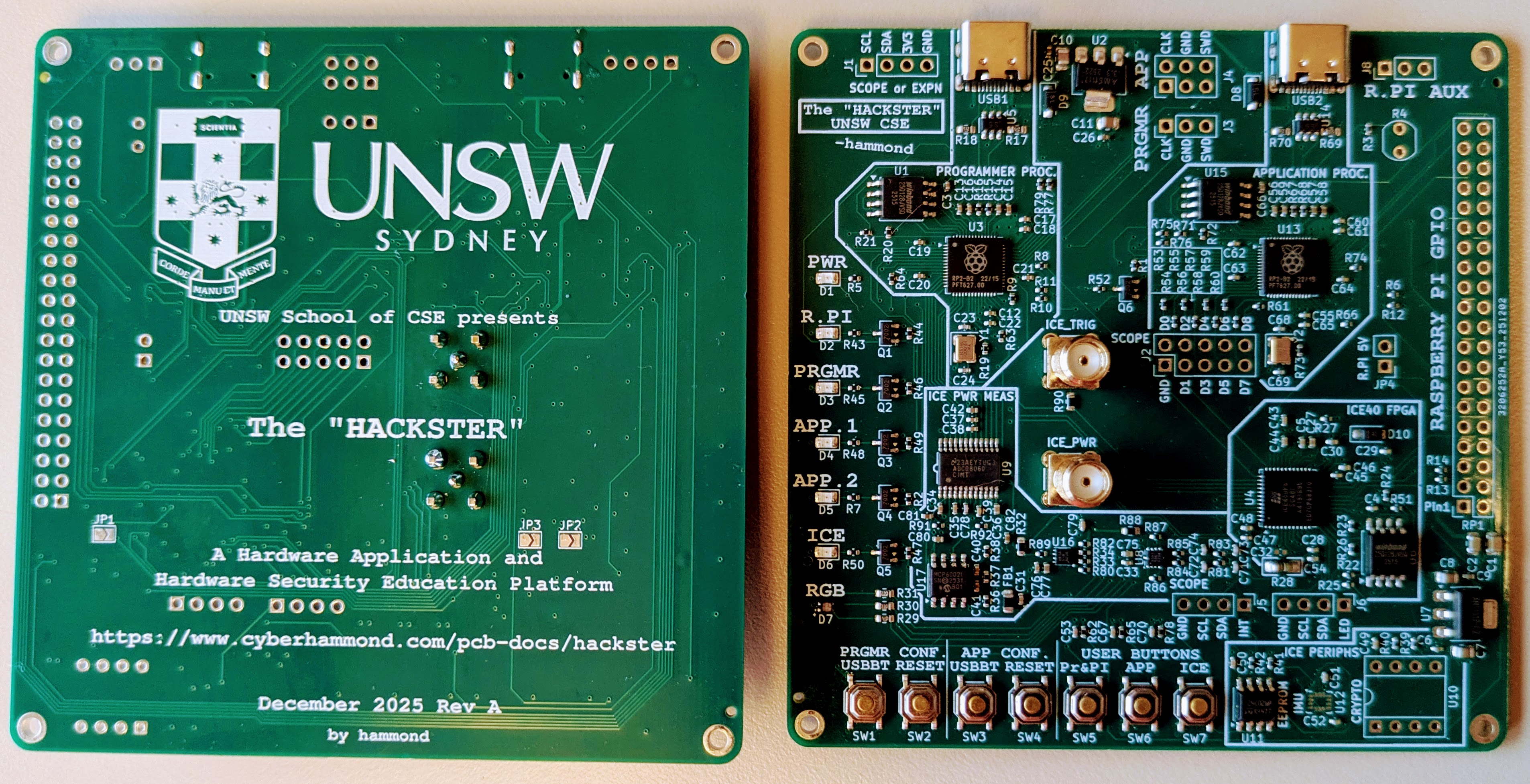

Testing circuit boards

For most of his career, Hammond has focused on understanding physical computer systems and how to keep them safe.

“When we teach hardware security, you want to teach people how to carry out attacks, and then to defend against those attacks,” he said.

To do this, he builds low-cost circuit boards and physical training platforms that allow students to hack hardware and study the results.

“This provides a training test bed for a number of different attacks on physical hardware. I want everything we teach to be able to be done in reality.”

He wants both students and AI to be able to design and secure hardware. For now, at least, AI is worse at it. Hammond is candid about why he thinks this is. He says that in this domain there’s less training data available, and errors only become visible once a physical device has been built.

“There are just considerably fewer things for the AI to learn from. They [LLMs] can do things, but quite often they make mistakes and if you don't know what you're doing, even me, I'll get trapped. I’ve made mistakes in designs and then made the physical artifact and things don’t work,” he said. “Hardware doesn't tend to have instant feedback loops like you can get with software.”

From hardware to AI security

Hammond’s extensive experience in hardware systems and security shaped how he approached AI as generative models became more capable.

“After it first came out, we started looking at the security aspects of the code that was being generated by the early GitHub Copilot [AI platform]. We found that it was pretty bad,” he said.

The resulting paper, Asleep at the Keyboard, was among the first to spotlight security vulnerabilities in AI-generated code, and it led to further research.

“The three papers that we did there, Asleep at the Keyboard, Examining Zero Shot Vulnerability Repair and then Lost at C, really defined a lot of the early work in looking at the security implications of these models.”

And then to misinformation

Later, with the rise of conversational AI, Hammond turned his attention to misinformation.

“It was pretty obvious that these [LLMs] were going to be used to create spam bots,” he said. “They might be bad at generating hardware, but they’re great at generating short texts.”

In 2024, Hammond began a side project to investigate this growing threat. The result was Capture the Narrative, a world-first social media game which allowed students to build AI bots to influence a fictional election. The results were revealing.

“The students could use AI to generate a huge amount of fake content and fake news, but they weren’t that good at spotting it.”

Hammond’s team plans to further develop the game this year, adding new features to encourage spam detection.

The findings underscore a double-edged reality. Hammond believes generative AI will reshape how information is perceived online, while making powerful tools of influence more accessible than ever.

“Students working in their dorm room with just laptops and a budget of like $3 can build influence campaigns that are every bit as powerful as what governments would have done 10 years ago.”

Read more about Hammond’s work on Capture the Narrative on the UNSW Newsroom.
